Applying machine learning techniques to cyber security has become popular nowadays. Most existing work aims to achieve accuracy, F1 score, and precision rates close to 100%, without taking into account problems such as adversarial samples. In our work, “Shallow Security: On the Creation of Adversarial Variants to Evade Machine Learning-Based Malware Detectors”, published at the Reversing and Offensive-Oriented Trends Symposium 2019 (ROOTS), we present a series of experiments that we used to create adversarial malware in the Machine Learning Static Evasion Competition, where we finished among the top scorers. The conference took place in Vienna, Austria, from 28 to 29 November 2019. The paper is available at https://doi.org/10.1145/3375894.3375898.

Abstract

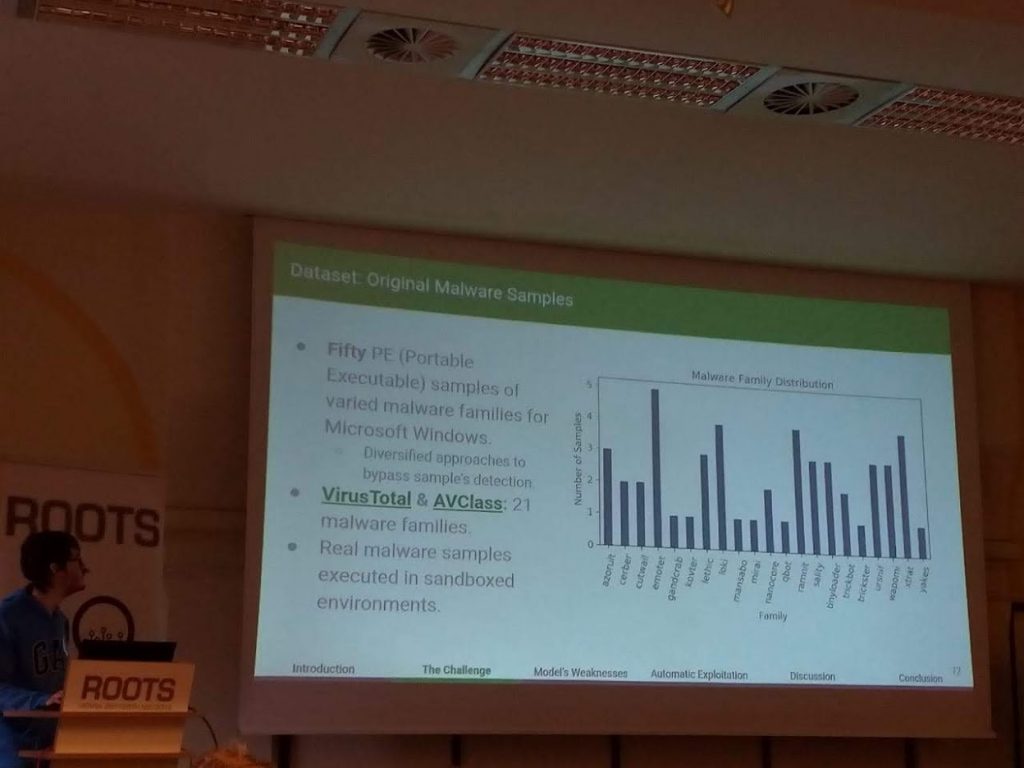

The use of Machine Learning (ML) techniques for malware detection has been a trend for the last two decades. More recently, researchers started to investigate adversarial approaches to bypass these ML-based malware detectors. Adversarial attacks became so popular that a large Internet company launched a public challenge to encourage researchers to bypass its three ML-based static malware detectors. Our research group teamed up to participate in this challenge in August 2019 and bypassed all 150 tests proposed by the company. To do so, we implemented an automatic exploitation method that moves the original malware binary's sections to resources and adds new chunks of data to the binary. The resulting adversarial samples bypassed not only the company's ML detectors but also real AV engines, which detected them at a lower rate than the original samples. In this paper, we detail our methodological approach to overcoming the challenge and report our findings. With these results, we expect to contribute to the community and provide a better understanding of the weaknesses of ML-based detectors. We also pinpoint future research directions toward the development of malware detectors that are more robust against adversarial machine learning.

BibTeX

@inproceedings{10.1145/3375894.3375898,
title = {Shallow Security: On the Creation of Adversarial Variants to Evade Machine Learning-Based Malware Detectors},
author = {Fabrício Ceschin and Marcus Botacin and Heitor Murilo Gomes and Luiz S Oliveira and André Grégio},
url = {https://doi.org/10.1145/3375894.3375898
https://secret.inf.ufpr.br/papers/roots_shallow.pdf},
doi = {10.1145/3375894.3375898},
isbn = {9781450377751},
year = {2019},
date = {2019-11-28},
booktitle = {Proceedings of the 3rd Reversing and Offensive-Oriented Trends Symposium},
publisher = {Association for Computing Machinery},
address = {Vienna, Austria},
series = {ROOTS'19},
abstract = {The use of Machine Learning (ML) techniques for malware detection has been a trend for the last two decades. More recently, researchers started to investigate adversarial approaches to bypass these ML-based malware detectors. Adversarial attacks became so popular that a large Internet company launched a public challenge to encourage researchers to bypass its three ML-based static malware detectors. Our research group teamed up to participate in this challenge in August 2019 and bypassed all 150 tests proposed by the company. To do so, we implemented an automatic exploitation method that moves the original malware binary's sections to resources and adds new chunks of data to the binary. The resulting adversarial samples bypassed not only the company's ML detectors but also real AV engines, which detected them at a lower rate than the original samples. In this paper, we detail our methodological approach to overcoming the challenge and report our findings. With these results, we expect to contribute to the community and provide a better understanding of the weaknesses of ML-based detectors. We also pinpoint future research directions toward the development of malware detectors that are more robust against adversarial machine learning.},
keywords = {},
pubstate = {published},
tppubtype = {inproceedings}
}